I’m using object storage as the primary storage, and I ran into a problem: when the uploaded file is large (my test file is 5GB), it can’t be transferred from my server to the object storage server.

tflidd

October 25, 2020, 4:07pm

A user found a workaround for an older version and a different storage backend:

I could resolve this issue as well by patching /lib/private/Files/ObjectStore/S3ObjectTrait.php and lowering S3_UPLOAD_PART_SIZE from 512MB to 256MB.

It might make sense to make this a configuration option? I guess the optimal size here is different depending on the S3 backend used? (e.g., I’m not using AWS S3 but DigitalOcean Spaces)
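
To get a feel for what lowering the part size means in practice, here is a rough sketch of how many parts a multipart upload needs for a 5GB test file at the part sizes mentioned in this thread. It assumes binary units (1MB = 1024 * 1024 bytes), and partsNeeded is a hypothetical helper written for illustration, not a Nextcloud function.

```php
<?php
// Hypothetical helper, not part of Nextcloud: number of parts a multipart
// upload needs when a file is split into fixed-size parts
// (the last part may be smaller than the others).
function partsNeeded(int $fileSize, int $partSize): int
{
    // Integer ceiling division.
    return intdiv($fileSize + $partSize - 1, $partSize);
}

$fileSize = 5 * 1024 ** 3; // 5GB test file, assuming binary units

foreach ([512, 256, 100] as $partSizeMb) {
    $partSize = $partSizeMb * 1024 * 1024;
    printf("%dMB parts: %d parts\n", $partSizeMb, partsNeeded($fileSize, $partSize));
}
```

Smaller parts mean more requests, but each request carries less data, which may be why a smaller part size helps on backends or connections that drop long-running uploads.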

There was a bug report last year as well:

opened 12:48PM - 30 Oct 19 UTC

closed 01:37AM - 01 Nov 19 UTC

bug

0. Needs triage

### Preface

I understand S3 as a primary storage is still technically considered an enterprise feature. I have a specific use case for this and it is for personal use.

I'm also fairly confident this behaviour can be replicated on a S3 external storage, so it's not just primary that's the problem.

### Steps to reproduce

1. Upload large file via webui

2. Upload fails

3. Check sql database

### Expected behaviour

1. File is uploaded successfully

2. File is written properly to bucket.

3. SQL database is updated to show that the file exists.

### Actual behaviour

1. Upload fails

2. Chunks written to bucket

3. No metadata is written to database that shows the file exists.

### Infrastructure changes

I changed Ceph's min part size to 2M (the minimum allowed) and the problem persists.

### Possible workarounds

Disabling multi-part uploads, which appears to not be possible in the current release.

### Server configuration

Nginx

PHP7.3-FPM

Latest ceph with RadosGW (civetweb frontend)

Nextcloud 17

S3 primary

MariaDB

**Operating system**:

Debian 10

**Updated from an older Nextcloud/ownCloud or fresh install:**

Fresh install

**Where did you install Nextcloud from:**

Github/source

**Are you using encryption:** yes/no

No

**Are you using an external user-backend, if yes which one:** LDAP/ActiveDirectory/Webdav/...

No

### Errors

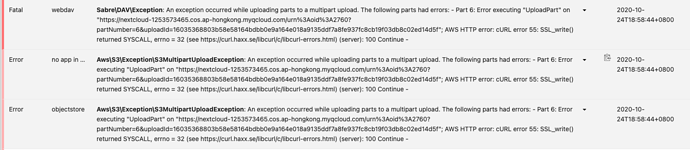

S3 upload - "AWS HTTP error: cURL error 55: SSL_write() returned SYSCALL, errno = 32 (see http://curl.haxx.se/libcurl/c/libcurl-errors.html)"

"An exception occurred while uploading parts to a multipart upload."

### Rclone via webdav errors

root@cl-01:/mnt/ceph/paradox/gamelibrary# rclone copy youtube.mkv webdav:/ --progress

2019-10-30 05:55:32 ERROR : youtube.mkv: Failed to copy: Put https://drive.ir12512.com/remote.php/webdav/youtube.mkv: stream error: stream ID 1; PROTOCOL_ERROR

2019-10-30 05:55:32 ERROR : Attempt 1/3 failed with 3 errors and: Put https://asgascom/remote.php/webdav/youtube.mkv: stream error: stream ID 1; PROTOCOL_ERROR

A couple of observations from dealing with this issue:

Check whether the S3 bucket is using IPv4/IPv6 dual-stack, in which case connecting via IPv6 might be slower, with much higher latency.

In Nextcloud 21.0.2, there are three parameters

that you can play with inside

to adjust the Nextcloud upload chunking to resolve the latency issue

As I just had this issue as well, I thought I'd share the solution that worked for me:

I had the same problem, but with my Nextcloud version (23) I had to change the uploadPartSize in a different file.

I modified:

/var/www/nextcloud/lib/private/Files/ObjectStore/S3ConnectionTrait.php

And changed the numerical value in this line:

$this->uploadPartSize = $params['uploadPartSize'] ?? 104857600;

Here I set the value to 104857600, which is 100MB (250MB did not solve it for me).
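
Since that line reads $params['uploadPartSize'] before falling back to the hard-coded default, it may be possible to set the value in config/config.php instead of patching the core file, so the change survives upgrades. This is a minimal sketch, assuming the 'arguments' array of the 'objectstore' entry is what ends up in $params. The bucket name, hostname and credentials are placeholders, not values from this thread:

```php
<?php
// Fragment of config/config.php (sketch only, merge into your existing $CONFIG).
$CONFIG = [
    'objectstore' => [
        'class' => '\\OC\\Files\\ObjectStore\\S3',
        'arguments' => [
            'bucket' => 'my-nextcloud-bucket',   // placeholder
            'key' => 'ACCESS_KEY',               // placeholder
            'secret' => 'SECRET_KEY',            // placeholder
            'hostname' => 's3.example.com',      // placeholder
            'use_ssl' => true,
            // Assumption: passed through as $params['uploadPartSize'].
            // 104857600 bytes = 100MB.
            'uploadPartSize' => 104857600,
        ],
    ],
];
```

If the setting is picked up, the edit to S3ConnectionTrait.php should no longer be needed. It is worth checking against the documentation for your Nextcloud version before relying on it.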

I got the inspiration to set it to 100MB thanks to this comment: S3 default upload part_…