Hello,

I am new to using and setting up Nextcloud AIO. Very, very nice!

I used the All-in-One VM image, with no reverse proxy, and set it up with daily backups and automatic updates.

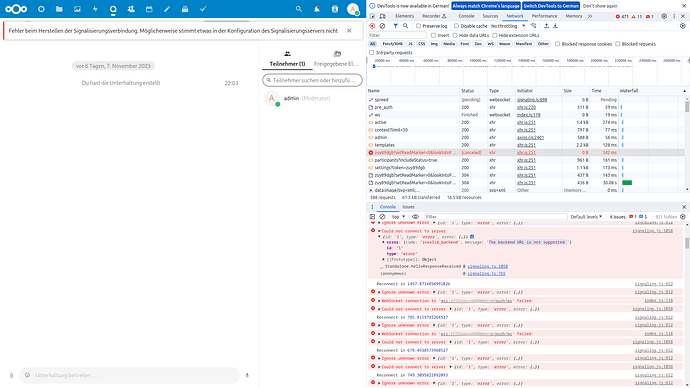

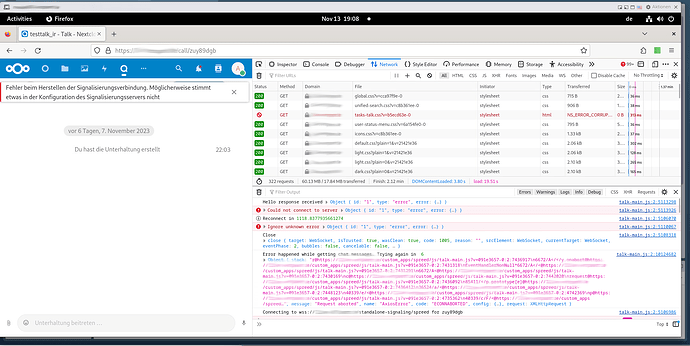

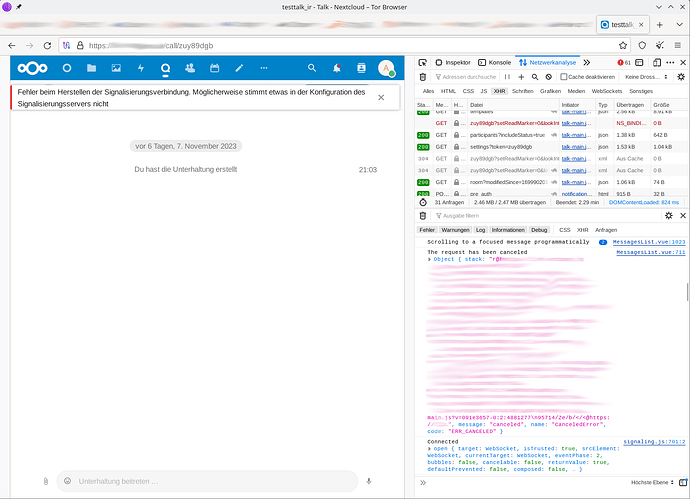

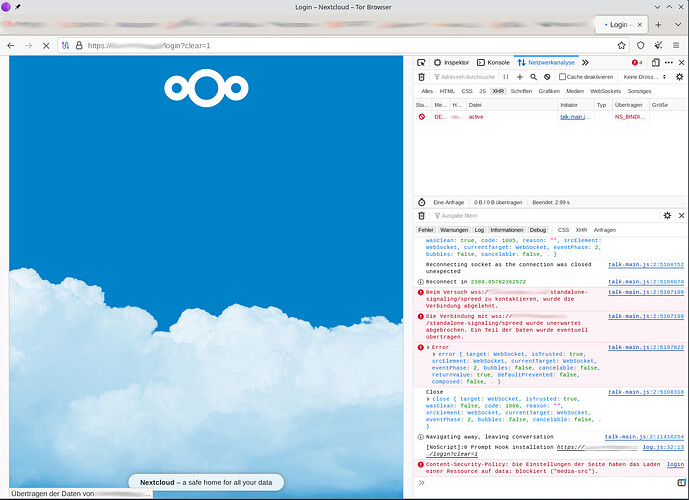

Unfortunately, Talk constantly shows an error saying that it cannot connect to the signaling server:

Establishing the signaling connection is taking longer than expected…

Error while establishing the signaling connection. Something may be wrong with the signaling server configuration.

In the AIO interface:

- Talk (Running)

- Talk Recording (Running)

In the Administration settings:

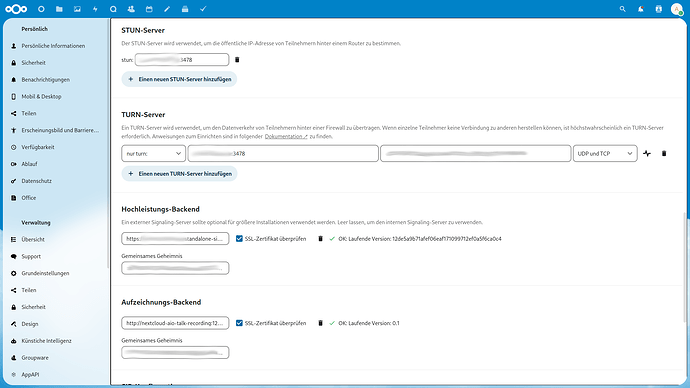

In the Talk settings, none of the following show an error:

- STUN server

- TURN server

- High-performance backend

- Recording backend
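The error is about the signaling WebSocket connection, whereas a `curl` request to `/api/v1/welcome` only exercises plain HTTPS. Here is a minimal Python sketch of how both URLs would be derived from the configured high-performance backend URL. The http→ws / https→wss mapping and the `/spreed` suffix are my assumption about how the Talk web client builds its WebSocket URL, `cloud.example.com` is a placeholder domain, and both helper names are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def signaling_ws_url(hpb_url: str) -> str:
    """WebSocket URL the Talk client is ASSUMED to open for a given HPB URL."""
    parts = urlsplit(hpb_url)
    # Assumption: http -> ws and https -> wss, everything else is rejected
    scheme = {"http": "ws", "https": "wss"}.get(parts.scheme)
    if scheme is None:
        raise ValueError(f"unexpected scheme: {parts.scheme!r}")
    # Assumption: the client appends "/spreed" to the configured path
    path = parts.path.rstrip("/") + "/spreed"
    return urlunsplit((scheme, parts.netloc, path, "", ""))


def welcome_url(hpb_url: str) -> str:
    """HTTP(S) welcome endpoint under the same prefix (the URL tested with curl)."""
    parts = urlsplit(hpb_url)
    path = parts.path.rstrip("/") + "/api/v1/welcome"
    return urlunsplit((parts.scheme, parts.netloc, path, "", ""))


hpb = "https://cloud.example.com/standalone-signaling/"
print(welcome_url(hpb))       # the endpoint that answers "Welcome"
print(signaling_ws_url(hpb))  # the endpoint the browser must upgrade to WebSocket
```

If this assumption holds, a working welcome response does not by itself prove that the WebSocket upgrade on the same prefix succeeds.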

Result of:

```
$ curl -vvv https://[DOMAIN.COM]:443/standalone-signaling/api/v1/welcome
* Trying [SERVERIP]:443...
* Connected to [DOMAIN.COM] ([SERVERIP]) port 443
* ALPN: curl offers h2,http/1.1
* TLSv1.3 (OUT), TLS handshake, Client hello (1):
* CAfile: /etc/ssl/certs/ca-certificates.crt
* CApath: none
* TLSv1.3 (IN), TLS handshake, Server hello (2):
* TLSv1.3 (IN), TLS handshake, Encrypted Extensions (8):
* TLSv1.3 (IN), TLS handshake, Certificate (11):
* TLSv1.3 (IN), TLS handshake, CERT verify (15):
* TLSv1.3 (IN), TLS handshake, Finished (20):
* TLSv1.3 (OUT), TLS change cipher, Change cipher spec (1):
* TLSv1.3 (OUT), TLS handshake, Finished (20):
* SSL connection using TLSv1.3 / TLS_AES_128_GCM_SHA256
* ALPN: server accepted h2
* Server certificate:
* subject: CN=[DOMAIN.COM]
* start date: Oct 24 17:56:12 2023 GMT
* expire date: Jan 22 17:56:11 2024 GMT
* subjectAltName: host "[DOMAIN.COM]" matched cert's "[DOMAIN.COM]"
* issuer: C=US; O=Let's Encrypt; CN=R3
* SSL certificate verify ok.
* TLSv1.3 (IN), TLS handshake, Newsession Ticket (4):
* using HTTP/2
* [HTTP/2] [1] OPENED stream for https://[DOMAIN.COM]:443/standalone-signaling/api/v1/welcome
* [HTTP/2] [1] [:method: GET]
* [HTTP/2] [1] [:scheme: https]
* [HTTP/2] [1] [:authority: [DOMAIN.COM]]
* [HTTP/2] [1] [:path: /standalone-signaling/api/v1/welcome]
* [HTTP/2] [1] [user-agent: curl/8.4.0]
* [HTTP/2] [1] [accept: */*]
> GET /standalone-signaling/api/v1/welcome HTTP/2
> Host: [DOMAIN.COM]
> User-Agent: curl/8.4.0
> Accept: */*
>
< HTTP/2 200
< alt-svc: h3=":443"; ma=2592000
< content-type: application/json; charset=utf-8
< date: Mon, 13 Nov 2023 11:57:02 GMT
< server: Caddy
< server: nextcloud-spreed-signaling/12de5a9b71afef06eaf171099712ef0a5f6ca0c4
< x-spreed-signaling-features: audio-video-permissions, hello-v2, incall-all, mcu, simulcast, switchto, transient-data, update-sdp, welcome
< content-length: 94
<
{"nextcloud-spreed-signaling":"Welcome","version":"12de5a9b71afef06eaf171099712ef0a5f6ca0c4"}
* Connection #0 to host [DOMAIN.COM] left intact
```
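To double-check this response mechanically, here is a small Python sketch that parses the JSON body and the `x-spreed-signaling-features` header shown in the `curl` output. The helper `check_welcome` is hypothetical. It only confirms that the HTTPS route through Caddy reaches nextcloud-spreed-signaling. My reading that the `mcu` feature corresponds to the "Using janus MCU" line in the container log is an assumption.

```python
import json


def check_welcome(body: str, features_header: str) -> dict:
    """Sanity-check a /api/v1/welcome response and collect advertised features."""
    data = json.loads(body)
    # The signaling server answers {"nextcloud-spreed-signaling": "Welcome", "version": ...}
    if data.get("nextcloud-spreed-signaling") != "Welcome":
        raise ValueError("response does not come from nextcloud-spreed-signaling")
    # The features header is a comma-separated list of feature names
    features = {f.strip() for f in features_header.split(",") if f.strip()}
    return {"version": data.get("version"), "features": features}


# Values copied from the curl output above
body = '{"nextcloud-spreed-signaling":"Welcome","version":"12de5a9b71afef06eaf171099712ef0a5f6ca0c4"}'
header = ("audio-video-permissions, hello-v2, incall-all, mcu, simulcast, "
          "switchto, transient-data, update-sdp, welcome")
result = check_welcome(body, header)
print(result["version"], sorted(result["features"]))
```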

Log of the nextcloud-aio-talk container (from `https://[DOMAIN.COM]:8443/api/docker/logs?id=nextcloud-aio-talk`):

```
++ dig nextcloud-aio-talk IN A +short
++ grep '[1]+$'
++ head -n1
++ sort
IPv4_ADDRESS_TALK=172.18.0.3
++ dig nextcloud-aio-talk AAAA +short
++ grep '[2]+$'
++ sort
++ head -n1
IPv6_ADDRESS_TALK=
set +x
Exec: /opt/eturnal/erts-14.0.2/bin/erlexec -noinput +Bd -boot /opt/eturnal/releases/1.12.0/start -mode embedded -boot_var SYSTEM_LIB_DIR /opt/eturnal/lib -config /opt/eturnal/releases/1.12.0/sys.config -args_file /opt/eturnal/releases/1.12.0/vm.args -erl_epmd_port 3470 -start_epmd false -- foreground
Root: /opt/eturnal
[27] 2023/11/13 04:02:06.438144 [INF] Starting nats-server
[27] 2023/11/13 04:02:06.441151 [INF] Version: 2.10.3
[27] 2023/11/13 04:02:06.441153 [INF] Git: [1528434]
[27] 2023/11/13 04:02:06.441155 [INF] Name: NAAJ4IULGZSXDTKSEQJM6OFLGIBKVCM5YHKLQGN62OT27QLADTU4L7GQ
[27] 2023/11/13 04:02:06.441160 [INF] ID: NAAJ4IULGZSXDTKSEQJM6OFLGIBKVCM5YHKLQGN62OT27QLADTU4L7GQ
[27] 2023/11/13 04:02:06.441167 [INF] Using configuration file: /etc/nats.conf
[27] 2023/11/13 04:02:06.453493 [INF] Listening for client connections on 127.0.0.1:4222
[27] 2023/11/13 04:02:06.453501 [INF] Server is ready
Janus commit: 22298e847202d7ae6e60a0592130b6f96752789e
Compiled on: Fri Oct 27 11:14:22 UTC 2023
Logger plugins folder: /usr/local/lib/janus/loggers
Starting Meetecho Janus (WebRTC Server) v0.14.0
Checking command line arguments...
Debug/log level is 3
Debug/log timestamps are disabled
Debug/log colors are disabled
[WARN] Janus is deployed on a private address (172.18.0.3) but you didn't specify any STUN server! Expect trouble if this is supposed to work over the internet and not just in a LAN...
[WARN] libcurl not available, Streaming plugin will not have RTSP support
[WARN] libogg not available, Streaming plugin will not have file-based Opus streaming
main.go:133: Starting up version 12de5a9b71afef06eaf171099712ef0a5f6ca0c4/go1.20.5 as pid 28
main.go:142: Using a maximum of 3 CPUs
natsclient.go:108: Connection established to nats://127.0.0.1:4222 (NAAJ4IULGZSXDTKSEQJM6OFLGIBKVCM5YHKLQGN62OT27QLADTU4L7GQ)
grpc_common.go:167: WARNING: No GRPC server certificate and/or key configured, running unencrypted
grpc_common.go:169: WARNING: No GRPC CA configured, expecting unencrypted connections
backend_storage_static.go:72: Backend backend-1 added for https://[DOMAIN.COM]/
hub.go:200: Using a maximum of 8 concurrent backend connections per host
hub.go:207: Using a timeout of 10s for backend connections
hub.go:303: Not using GeoIP database
main.go:228: Could not initialize janus MCU (dial tcp 127.0.0.1:8188: connect: connection refused) will retry in 1s
[WARN] No Unix Sockets server started, giving up...
[WARN] The 'janus.transport.pfunix' plugin could not be initialized
mcu_janus.go:294: Connected to Janus WebRTC Server 0.14.0 by Meetecho s.r.l.
mcu_janus.go:300: Found JANUS VideoRoom plugin 0.0.9 by Meetecho s.r.l.
mcu_janus.go:305: Data channels are supported
mcu_janus.go:309: Full-Trickle is enabled
mcu_janus.go:311: Maximum bandwidth 1048576 bits/sec per publishing stream
mcu_janus.go:312: Maximum bandwidth 2097152 bits/sec per screensharing stream
mcu_janus.go:318: Created Janus session 6821307493612106
mcu_janus.go:325: Created Janus handle 1522041572882229
main.go:263: Using janus MCU
hub.go:385: Using a timeout of 10s for MCU requests
backend_server.go:111: No IPs configured for the stats endpoint, only allowing access from 127.0.0.1
main.go:339: Listening on 0.0.0.0:8081
client.go:284: Client from [CLIENTIP] has RTT of 151 ms (151.414891ms)
client.go:284: Client from [CLIENTIP] has RTT of 112 ms (112.687309ms)
client.go:284: Client from [CLIENTIP] has RTT of 94 ms (94.838008ms)
client.go:284: Client from [CLIENTIP] has RTT of 107 ms (107.658469ms)
client.go:284: Client from [CLIENTIP] has RTT of 409 ms (409.641487ms)
client.go:284: Client from [CLIENTIP] has RTT of 213 ms (213.879134ms)
client.go:284: Client from [CLIENTIP] has RTT of 426 ms (426.639512ms)
client.go:284: Client from [CLIENTIP] has RTT of 283 ms (283.914738ms)
client.go:284: Client from [CLIENTIP] has RTT of 122 ms (122.435144ms)
client.go:284: Client from [CLIENTIP] has RTT of 218 ms (218.577487ms)
client.go:284: Client from [CLIENTIP] has RTT of 372 ms (372.43193ms)
client.go:284: Client from [CLIENTIP] has RTT of 18 ms (18.36552ms)
client.go:284: Client from [CLIENTIP] has RTT of 16 ms (16.601217ms)
client.go:284: Client from [CLIENTIP] has RTT of 18 ms (18.548559ms)
client.go:284: Client from [CLIENTIP] has RTT of 18 ms (18.26575ms)
client.go:284: Client from [CLIENTIP] has RTT of 18 ms (18.064661ms)
client.go:284: Client from [CLIENTIP] has RTT of 17 ms (17.968451ms)
```
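The `client.go:284` RTT lines can be summarized with a short Python sketch. My unconfirmed assumption is that the signaling server logs an RTT only after a ping/pong exchange on a WebSocket that is already open. If so, these lines would mean the browser does reach the signaling server at least some of the time. The helper `rtt_summary` is hypothetical and only parses the log format shown here.

```python
import re
from statistics import median

# Matches e.g. "Client from [CLIENTIP] has RTT of 151 ms (151.414891ms)"
RTT_LINE = re.compile(r"Client from (\S+) has RTT of (\d+) ms")


def rtt_summary(log_text: str) -> dict:
    """Count RTT log lines and report min/max/median of the whole-ms values."""
    rtts = [int(m.group(2)) for m in RTT_LINE.finditer(log_text)]
    if not rtts:
        return {"count": 0}
    return {"count": len(rtts), "min": min(rtts), "max": max(rtts), "median": median(rtts)}


sample = """\
client.go:284: Client from [CLIENTIP] has RTT of 151 ms (151.414891ms)
client.go:284: Client from [CLIENTIP] has RTT of 18 ms (18.36552ms)
client.go:284: Client from [CLIENTIP] has RTT of 409 ms (409.641487ms)
"""
print(rtt_summary(sample))
```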

Where else could I look? Is there anything I might have missed regarding the All-in-One VM image and Talk?

Your support would be greatly appreciated.

Best regards, Ive

Using:

- All-in-One VM image (https://download.nextcloud.com/aio-vm/)

- Nextcloud Hub 6 (27.1.3)

- Nextcloud AIO v7.5.1

- The integrated daily backup and automatic updates