Nextcloud Server version: Nextcloud Hub 9 (30.0.5)

Operating System: Ubuntu 24.04.1 LTS

Web Server: Apache/2.4.58

PHP version: 8.3.6

Nextcloud Server version: Nextcloud Hub 9 (30.0.16)

Operating System: Ubuntu 24.04.3 LTS

Web Server: Apache/2.4.58

PHP version: 8.3.25

Platform: Raspberry Pi 5, 8 GB RAM

Hello! I installed Nextcloud on a Raspberry Pi 5 with 8 GB of RAM.

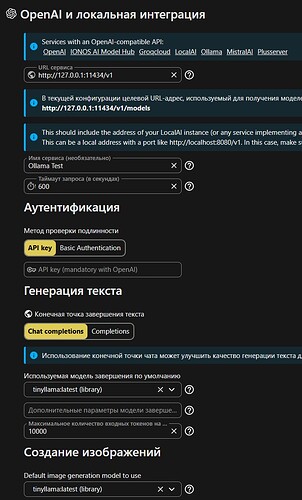

Installed applications: Nextcloud Assistant, Nextcloud Assistant Context Chat, OpenAI and LocalAI integration.

I also installed Ollama and the tinyllama model.

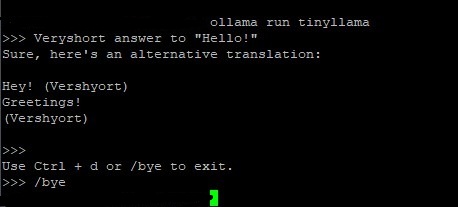

When I run the LLM from the command line, everything works and I can see the load on the CPU:

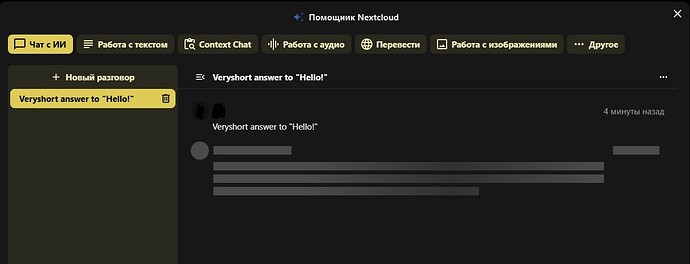
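To rule out the CLI as the only working path, the same model could also be queried over Ollama's HTTP API, which is what a network client would use. The following is only a minimal sketch, assuming Ollama listens on its default address `http://localhost:11434` and that the model is named `tinyllama`; the helper names `build_generate_request` and `ask` are hypothetical and not part of any Nextcloud or Ollama tooling.

```python
import json
import urllib.request

# Assumption: Ollama listens on its default address; adjust if configured differently.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(model, prompt, base_url=OLLAMA_URL):
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        base_url.rstrip("/") + "/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(model, prompt, timeout=300):
    """Send the prompt to Ollama and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


if __name__ == "__main__":
    # A generous timeout is used because generation on a Raspberry Pi CPU can be slow.
    print(ask("tinyllama", "Say hello in one sentence."))
```

If this returns text while the Assistant still hangs, the problem is more likely on the Nextcloud side (for example the configured service URL or the background job) than in Ollama itself.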

But when I try to use the LLM through the Assistant, the task runs endlessly and the CPU load shows that nothing is being processed.

The service status reports that there is no GPU.

While the Assistant is processing a message with the LLM, the Nextcloud log shows the following error:

Error while running background job OC\TaskProcessing\SynchronousBackgroundJob (id: 5638, arguments: null)

Can anyone point me in the right direction to solve this problem?

Thank you!