Alright, doing this now.

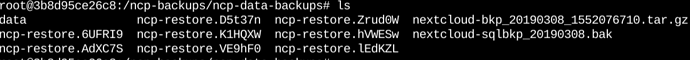

I forgot to mention this before, but there are old ncp-restore.XXXXX folders inside the backups folder from when I tried numerous times before.

They all have varying amounts of progress, but none of them got very far. Some have what I assume is only the database backup (nextcloud-sqlbkp.XXXXXXX), while others actually have a few of my files in them.

So I’m doing it manually using the command. It’s working, but insanely slow. That’s my issue here, from the looks of it.

I just checked ncp-restore.6UEFRI9, the one I left running for about four to six hours through ncp-config. It has more files in it than any of the others, about 8GB out of around 46GB. I might actually just be a massive idiot here, cancelling it before it can finish. But at the same time, that doesn’t explain why there is little to no CPU usage or why it is going so slowly. I even tried moving the backup off the USB drive to a local folder inside the container, but that produced an entirely new error:

Running nc-restore

Can only restore from ext/btrfs filesystems

Done. Press any key...

I am well confused here.
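
For reference, the "ext/btrfs" error above is about the filesystem type of the folder involved, and that can be checked from a shell inside the container. This is only a rough sketch, assuming GNU coreutils `stat` is available. `BACKUP_DIR` is a placeholder for wherever the backup was moved to, and whether this is the same check nc-restore performs is an assumption.

```shell
#!/bin/sh
# Print the type of the filesystem that holds a given path.
# Assumes GNU coreutils `stat`; BSD/macOS stat uses different flags.
fs_type() {
    stat -f -c %T "$1"
}

# Placeholder path: substitute the folder the backup was moved to.
BACKUP_DIR="${BACKUP_DIR:-/}"
echo "filesystem type of $BACKUP_DIR: $(fs_type "$BACKUP_DIR")"
```

GNU `stat` reports ext4 as `ext2/ext3`. A folder inside a container often shows up as something else (an overlay, for example), which might be why the local copy was rejected.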