Hi Community

The Basics

- Nextcloud Server version (e.g., 29.x.x):

Nextcloud 32.0.5

- Operating system and version (e.g., Ubuntu 24.04):

Ubuntu 24.04

- Web server and version (e.g., Apache 2.4.25):

Apache 2.4.58

- PHP version (e.g., 8.3):

8.3-fpm

- Is this the first time you’ve seen this error? (Yes / No):

Yes

- Installation method (e.g. AIO, NCP, Bare Metal/Archive, etc.)

Bare Metal

- Are you using Cloudflare, mod_security, or similar? (Yes / No)

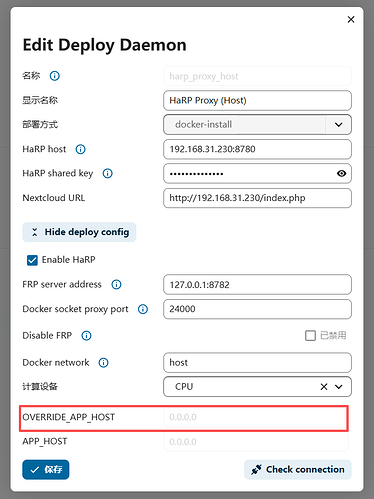

No, everything runs on my own network.

Summary of the issue you are facing:

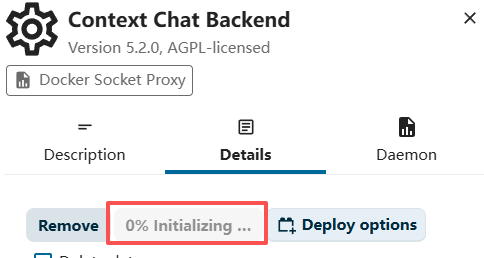

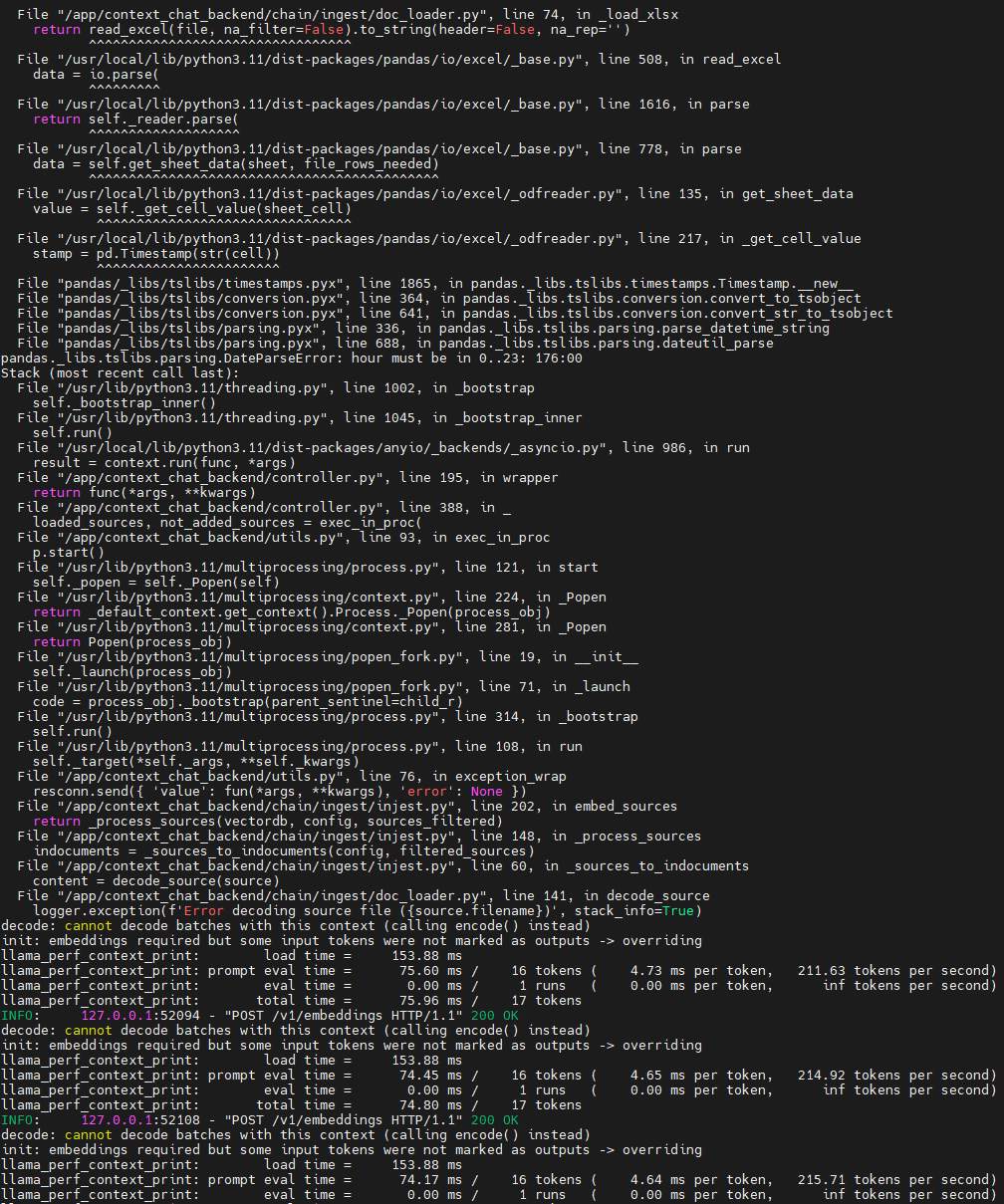

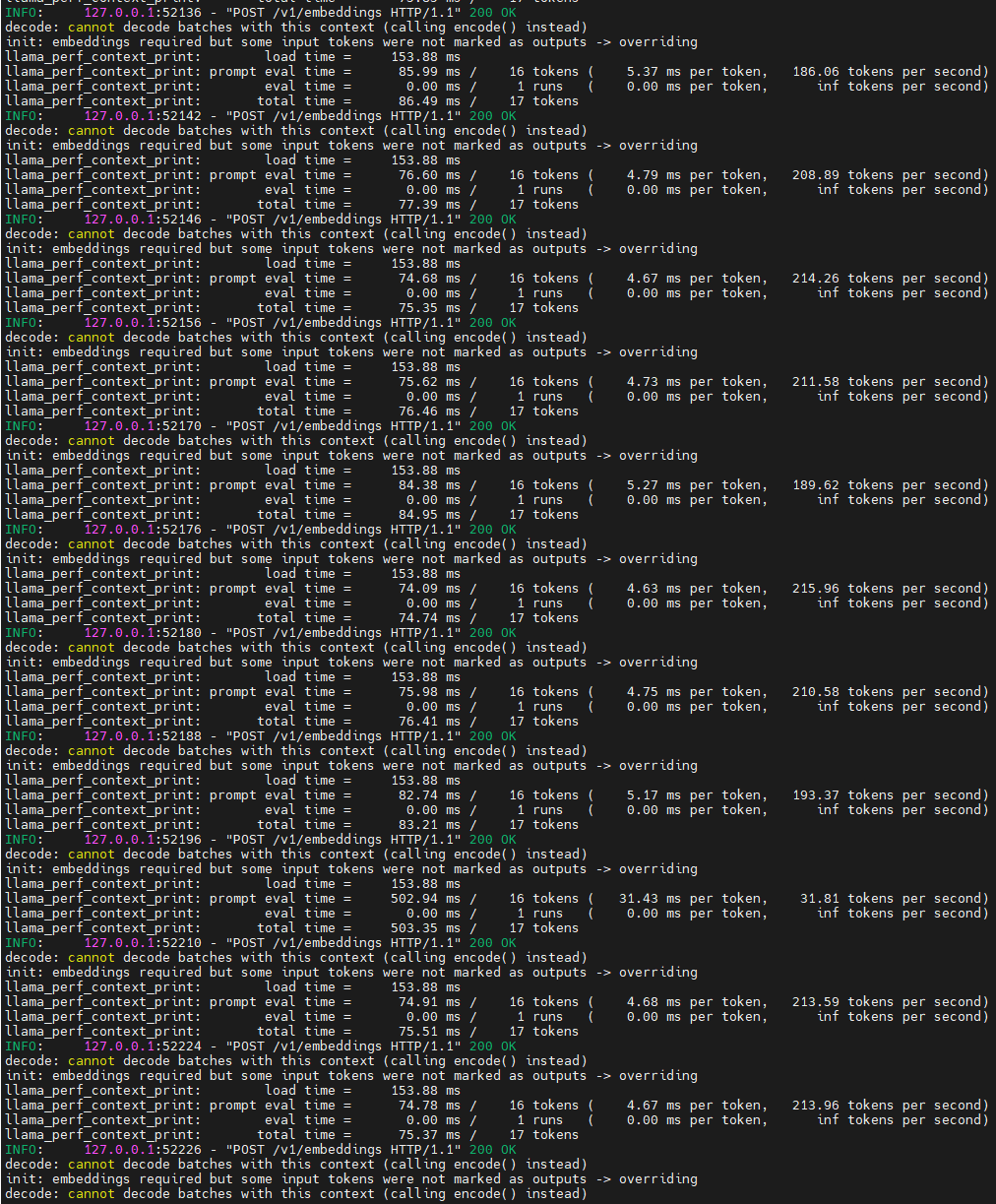

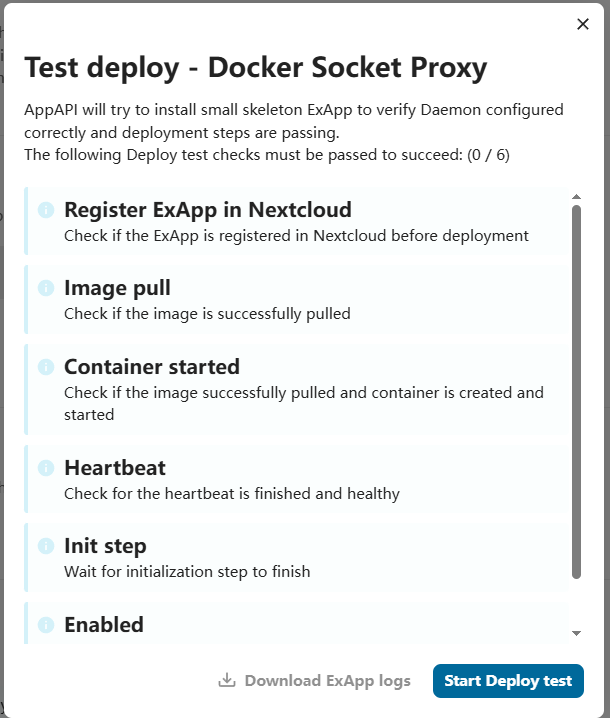

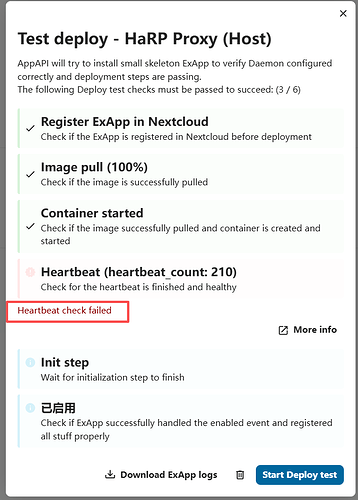

I want to enable the Nextcloud AI Assistant for context_chat, and I followed the official guide at https://docs.nextcloud.com/server/latest/admin_manual/ai/app_context_chat.html. Everything looked good, but when I check the status of “Context Chat Backend” under Active apps, it shows “0% initializing…”.

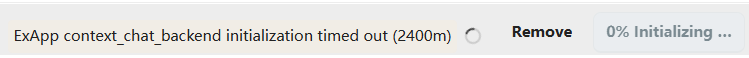

After about 40 minutes, the status changes to “Exapp context_chat_backend initialization time out (2400m)”.

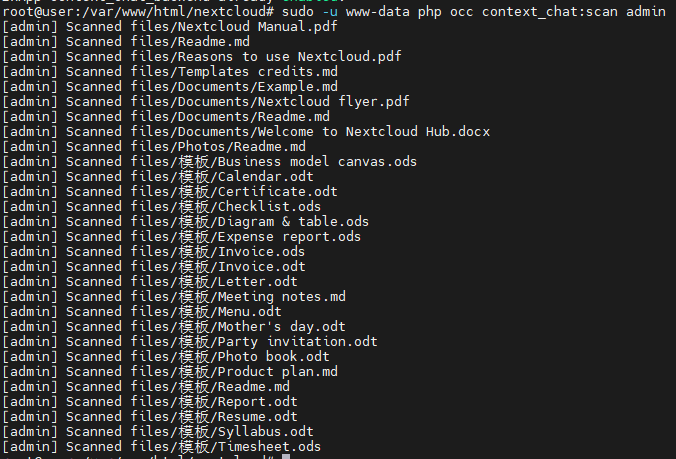

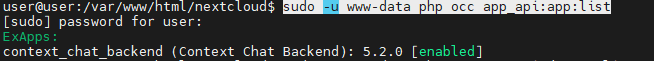

However, when I then scan my folder with the command “sudo -u www-data php occ context_chat:scan admin”, it works fine and everything gets indexed.

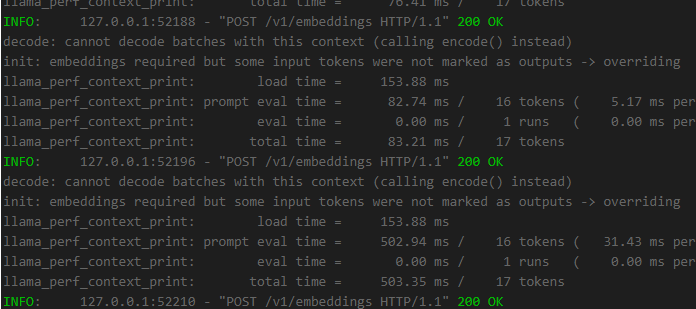

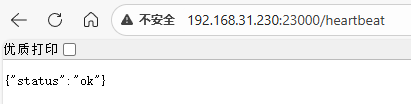

Also, the Context Chat Backend container in Docker shows as running normally (see screenshot).

I’ve been stuck on this for over a week. I’ve searched everywhere and even asked various AI tools, but nothing has worked. Can anyone help me out? Many many thanks!

System logging information:

A TaskProcessing context_chat:context_chat task with id 27 failed with the following message: Error received from Context Chat Backend (ExApp) with status code 503: unknown error

RuntimeException

Error received from Context Chat Backend (ExApp) with status code 503: unknown error
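
To go with the log entry above, here is a minimal sketch of how the backend container's state and recent output could be collected on the Docker host. The container name nc_app_context_chat_backend is an assumption (AppAPI's usual nc_app_<app id> naming), and exapp_container_name is a hypothetical helper used only for illustration; adjust both to the actual setup.

```shell
#!/bin/sh
# Hypothetical helper: build the container name from an ExApp id.
# Assumption: AppAPI names ExApp containers "nc_app_<app id>".
exapp_container_name() {
    printf 'nc_app_%s\n' "$1"
}

name=$(exapp_container_name context_chat_backend)

if command -v docker >/dev/null 2>&1; then
    # Show whether the container is up and for how long.
    docker ps --all --filter "name=$name" --format '{{.Names}}: {{.Status}}' || true
    # Print the last 100 log lines, including anything written to stderr.
    docker logs --tail 100 "$name" 2>&1 || true
else
    echo "docker not found on this host"
fi
```

The container's own log output around the time of the 503 is usually more specific than the “unknown error” recorded in the Nextcloud log, so it is worth attaching to the post.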