Try SyncThing. FOSS and works well.

OK, I’ll make a note to try it.

Thanks to everyone who’s taken an interest in this. With master-master not supported I don’t see a way of expanding this beyond a local network PoC, so I will spin it all down.

What I’ve learned:

- SyncThing is super reliable, handles thousands upon thousands of files and has the flexibility to be used in a use case such as this (full webapp replication between several servers without any fuss), however:

  - Feasibility in a distributed deployment model, where latency and potentially flaky connections come into play, wasn’t tested thoroughly. While the version management was spot on at picking up on out-of-sync files, it would need far more testing before being even remotely considered for a larger deployment.

  - Load on the server can be excessive if left set up in a default state; however, intentionally reducing the cap for the processes will obviously impact sync speed and reliability.

- Data/app replication between sites is not enough if you have multiple nodes sharing a single database

  - The first few attempts at a NC upgrade, for example, led to issues with every other node once the first had a) updated itself and b) made changes to the database.

    - This may be rectified by undertaking upgrades on each node individually but stopping at the DB step, opting to run that on only one node. I didn’t test this though.

  - I think a common assumption is you only need to replicate /data, however what happens if an admin adds an app on one node? It doesn’t show up on the others. Same for themes.

- Galera is incredible

  - The way it instantly replicates, fails over and recovers, particularly combined with HAProxy which can instantly see when a DB is down and divert, is so silky smooth I couldn’t believe it.

  - A power cut entirely wiped Galera out and I had to build it again, though this wouldn’t happen in a distributed scenario. Despite the cluster failure I was still able to extract the database and start again with little fuss anyway.

  - The master-master configuration is not compatible with NC, so at best you’ll have a master-slave(n) configuration, where all nodes have to write to the one master no matter where in the world it might be located. Another solution for multimaster is needed in order for nodes to be able to work seamlessly as if they’re the primary at all times.

- Remote session storage is a thing, and NC needs it if using multiple nodes behind a floating FQDN

  - Otherwise refreshing the page could take you to another node and your session would be nonexistent. Redis was a piece of cake to set up on a dedicated node (though it could also live on an existing node) and handled the sessions fine.

  - It doesn’t seem to scale well though, with documentation suggesting VPN or tunneling to gain access to each Redis node in a Redis “cluster” (not a real cluster), as authentication is plaintext or nothing, and that’s bad if you’re considering publishing it to the internet for nodes to connect to.

- When working with multiple nodes, timing is everything.

  - I undertook upgrades that synced across all nodes without any further input past kicking it off on the first node; however, don’t ever expect to be able to take the node out of maintenance mode in a full-sync environment until all nodes have successfully synced, otherwise some nodes will sit in a broken state until the sync completes.

  - Upgrading/installing apps/other sync-related stuff takes quite a bit longer, but the status pages of SyncThing or another distributed storage/sync solution will keep you updated on sync progress.

- The failover game must be strong

  - By default HAProxy won’t check nodes quickly enough for my liking, meaning if a node suddenly fails and I refresh, it may result in an error. I found the following backend setting resulted in faster checks and was more ruthless about when a node comes back up (requires 2 successful responses to come back into the cluster, but only one fail to drop out):

server web1 10.10.20.1:80 check fall 1 rise 2
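To make the `fall 1 rise 2` behaviour a bit more concrete, here is a minimal Python sketch of the counting logic as I understand it. It is a toy model, not HAProxy’s actual implementation; the class and function names (`ServerHealth`, `simulate`) are made up for illustration, and the comparison run assumes HAProxy’s default of `fall 3 rise 2`.

```python
from dataclasses import dataclass


@dataclass
class ServerHealth:
    """Toy model of one backend server's state under fall/rise counting.

    Hypothetical illustration only; this is not HAProxy code.
    """
    fall: int = 1    # consecutive failed checks needed to mark the server DOWN
    rise: int = 2    # consecutive successful checks needed to mark it UP again
    up: bool = True
    streak: int = 0  # consecutive results that contradict the current state

    def record(self, check_ok: bool) -> bool:
        """Feed in one health-check result and return whether the server is UP."""
        if check_ok == self.up:
            # The result agrees with the current state, so reset the counter.
            self.streak = 0
            return self.up
        self.streak += 1
        threshold = self.rise if check_ok else self.fall
        if self.streak >= threshold:
            # Enough consecutive contradicting results: flip the state.
            self.up = check_ok
            self.streak = 0
        return self.up


def simulate(results, fall=1, rise=2):
    """Return the UP/DOWN state after each health-check result."""
    server = ServerHealth(fall=fall, rise=rise)
    return [server.record(ok) for ok in results]


if __name__ == "__main__":
    checks = [True, False, True, False, True, True]
    print("fall 1 rise 2:", simulate(checks, fall=1, rise=2))
    print("fall 3 rise 2:", simulate(checks, fall=3, rise=2))
```

With `fall 1` a single failed check drops the node straight away, and a flapping node stays out until it has answered twice in a row. With `fall 3` the same flapping node would never leave the pool, which matches the errors on refresh described above.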

In summary, it was a good experiment and I learned a few things. Although I faced no issue with Galera in master-master I understand it could lead to problems down the road and it’s therefore not something I’d invest any more time into. I will however use some of the things I learned here to improve other things I manage and perhaps will be able to revisit this in the future if Global Scale flops (jokes)

If anyone does wish to have a poke around, check performance and whathaveyou, by all means feel free to do so for the rest of today; this evening it’s all being destroyed.

Thanks particularly to @LukasReschke for your advice!

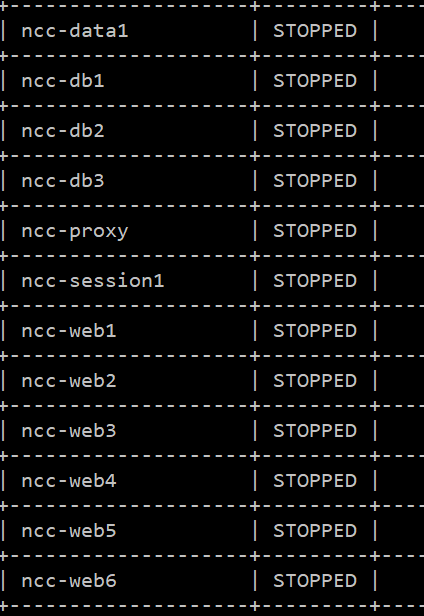

Edit: All now shut down.

Wrote it up in a bit more detail here:

Wow, very very useful stuff here, many thanks @JasonBayton

I’m working with an NC12 installation with 40 not very active users.

At the moment I have a single instance of NC+DB+Redis but the Virtual Machines involved (3) are already clustered. Indeed I have the following already in place:

- MariaDB 10.1 Galera master-master (2 nodes + 1 arbiter)

- GlusterFS, 2 nodes

- 2 HAProxy instances with floating IP, all configured as active-backup

I’m not interested in balancing, only in the failover features: I need to shut down a node without users noticing it.

I have a couple of questions:

- Because of the ‘READ-COMMITTED’ requirement, am I in a dangerous situation even with 1 NC instance pointing to my master-master clustered DB through HAProxy in an active-backup fashion (all queries go to the active DB; the secondary master is not used until the first one goes down)?

- Having glusterfs in place, adding a second NC instance (application server) with the same gluster volume for the entire nextcloud webroot is feasible?

- Redis: my nodes are on private network, do you see any issue using a clustered redis here?

Many thanks

Yes. You’ll need to migrate away from this ASAP.

[quote=“aventrax, post:25, topic:12863”]

2) Having glusterfs in place, adding a second NC instance (application server) with the same gluster volume for the entire nextcloud webroot is feasible?

[/quote]

Worked for me perfectly well over a few-week period. It made the most sense given the nodes share the same database, why shouldn’t they all act like one server? However, longer-term testing (and bullet-proof backups) is absolutely required. What I did was a PoC, not a recommendation.

Nope, in fact that seems to be how it was designed. Optionally make use of the authentication requirement to deter other users on the LAN from snooping; even if the password is stored in plaintext it can be a deterrent.

Ok. I’m again on a single MariaDB instance.

I’m now wondering how to reach my goal: being able to shut down the master database with nobody noticing it.

I searched and I haven’t found anything like a Galera master-slave setup; it seems that Galera is master-master ONLY, am I right?

Searching for another master-slave setup I found a lot of documentation, but I’m still asking myself what the simplest master-slave setup with automatic failover is.

It seems to me that MariaDB has a tool called “replication manager” that can be installed on a third host and can provide a master switchover or failover. Not bad, but not enough as far as I understand.

The remaining piece of my puzzle is something that can detect which node has the master role and point all the writes to it, and I thought it could be MaxScale with a floating IP managed by keepalived.

It seems “a bit” complex to me. I had configured a Galera master-master setup in minutes, and for a less “advanced” master-slave setup it seems weird to require all this software (replication manager, MaxScale, keepalived…), doesn’t it?

Am I missing something?

No indeed, the NC docs repeatedly talk about Galera but in a master-slave capacity for which there doesn’t appear to be a valid configuration option.

Master-slave with failover is equally not well documented from what I can see online, though the tools you mention will help.

@LukasReschke @nickvergessen @jospoortvliet can any of you shed light on this? Why is Galera mentioned in the docs if master-master seems to be the only valid config and NC doesn’t support it? Will NC support it in the future?

[quote=“aventrax, post:27, topic:12863”]

Am I missing something?

[/quote]

No, Galera is frighteningly simple to set up.

I believe a working setup would require “a SQL-Proxy for read/write splitting” - I know the TU berlin has that as this is their description of their setup:

tubIT employs a setup with F5 Big IP Load Balancers ahead of a number of web application servers. Data is stored on a GPFS storage system. The database is on a Galera MySQL cluster using a SQL-Proxy for read/write splitting, in LXD containers managed with OpenStack. File locking and session management is provided using Redis, also on LXD managed with OpenStack. Authentication is handled through LDAP and Kerberos.

The TU currently uses 4 application servers running a LNMP (Linux/NGINX/MySQL/PHP) stack with PHP 5.6 on CentOS 7.3. Further application servers are running the DFN-Cloud instances. A locally replicated LDAP instance runs on each of the application servers. Each server has about 16 virtual CPU cores with 18GB RAM.

The Galera cluster employs 3 container with 32-core cpus and 128GB ram each. Its performance is very critical to the performance of the overall cloud. To serve more incoming queries the number of instances can be increased, however every new instance increases the resources required to synchronize within the cluster, limiting scalability.

The GPFS cluster is load balanced between four GSS nodes to improve response times and provide failover.

(text from a case study I’m working on, hopefully coming out in a week or two)

I put it between “” as I have no idea what I’m even saying, but perhaps Jason does.

The SSL proxy is fine and I understand why you’d use one for a clustered system (the deployment recommendations also suggest using one). However there again Galera is mentioned. The only Galera I’ve seen is master-master which apparently both is and isn’t supported due to:

Then:

Master-Slave doesn’t appear to be available for Galera, but then also:

Emphasis mine. If Galera is master-master only, what are the docs referring to?

Honestly, I’m lost, I don’t know the details here. @MorrisJobke I know you’re on holiday but when you’re back perhaps you can enlighten Jason

Note that this whole Galera stuff is of course for larger installations so our docs might not be super up to date - we have some internal docs we share with customers.

Can I get those please? I’d love to get a PoC v.2 up and running on solid foundations. I can sign an NDA (on the docs, obviously methods would be revealed on a documented build) if required.

I honestly don’t know exactly where they are or even if it’s mostly in people’s head - I’m entirely not involved in that stuff, I just know we help customers with this and assume it is written down. Really would need Morris or maybe @MariusBluem to chip in

@MorrisJobke and @MariusBluem would you guys like to chip in please?

For the read-write split you need a MaxScale proxy. And the Galera cluster needs to be in master-slave mode with the master receiving the writes and the slave(s) the reads.

Does this help you?

No, sorry. Galera is master-master and explicitly states that in the (MariaDB) Galera docs, so I don’t understand where the master-slave piece comes into it.

Ah right - it is master-master, but we need to somehow make it master-slave (slave as in “handles only reads”) by means of the MaxScale proxy.

OK, so does this no longer present an issue then? I’d assume it would:

A multi-master setup with Galera cluster is not supported, because we require READ-COMMITTED as transaction isolation level. Galera doesn’t support this with a master-master replication which will lead to deadlocks during uploads of multiple files into one directory for example.

I’m following this thread with interest. The question about READ-COMMITTED must be clarified

It still holds, but as you only do writes to one node it will not happen. If you use Galera without the proxy in front and do writes to all nodes, it will fail because of random deadlocks. By splitting reads and writes across different nodes (or better: sending the writes to a single node), this will not happen anymore. The sentence is a bit badly written.
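For anyone following along, the “writes to a single node” idea can be sketched in a few lines of Python. This is only a toy illustration of read/write splitting, not how MaxScale is implemented; the `ReadWriteSplitter` class and the node names `db1`, `db2`, `db3` are made up, and a real proxy also has to deal with transactions, `SELECT ... FOR UPDATE`, node health and so on.

```python
from itertools import cycle

# Statement prefixes treated as writes in this toy model (an assumption;
# a real SQL proxy parses statements and tracks transactions properly).
WRITE_PREFIXES = ("INSERT", "UPDATE", "DELETE", "REPLACE", "CREATE", "ALTER", "DROP")


class ReadWriteSplitter:
    """Send every write to one designated node and spread reads over the rest."""

    def __init__(self, nodes):
        if not nodes:
            raise ValueError("at least one database node is required")
        self.nodes = list(nodes)
        # The first node is the only one that ever receives writes.
        self.writer = self.nodes[0]
        # Reads rotate over the remaining nodes, or fall back to the writer
        # if it is the only node available.
        self._readers = cycle(self.nodes[1:] or [self.writer])

    def route(self, sql: str) -> str:
        """Return the name of the node that should execute this statement."""
        statement = sql.lstrip().upper()
        if statement.startswith(WRITE_PREFIXES):
            return self.writer
        return next(self._readers)


if __name__ == "__main__":
    router = ReadWriteSplitter(["db1", "db2", "db3"])
    for query in ("INSERT INTO files VALUES (1)", "SELECT * FROM files", "SELECT 1"):
        print(router.route(query), "<-", query)
```

Because every write lands on the same node, two nodes never commit conflicting changes at the same time, which is the situation described above as causing the random deadlocks.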