One remaining idea is that DNS resolution does not work inside the mastercontainer. Can you try pulling the latest mastercontainer image and recreating the container? That should reveal the issue on startup…

Okay, it seems this is probably not the issue here.

Sorry, I don’t have any idea what the problem could be.

It works on my side…

DNS inside the mastercontainer seems to work just fine:

```
$ docker exec -t -i <container id> /bin/bash
root@<container id>:/var/www/docker-aio# python3
Python 3.9.2 (default, Feb 28 2021, 17:03:44)
[GCC 10.2.1 20210110] on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> import socket
>>> print(socket.gethostbyname('<my.dyndns.domain>'))
xxx.xxx.xxx.xxx
```

Here xxx.xxx.xxx.xxx is my public IP, which the DynDNS account points to.

For troubleshooting, I tinkered a bit with ConfigurationManager.php and modified line 234 to hijack the error message so that it prints the contents of the relevant variables:

```php
throw new InvalidSettingConfigurationException("Response: ".$response." --- InstanceID: ".$instanceID." --- Protocol: ".$protocol." --- Domain: ".$domain." --- Port: ".$port." --- Domain does not point to this server or reverse proxy not configured correctly.");
```

As a result, I get the following error when trying to register my DynDNS domain:

```
Response: <html> <head><title>400 The plain HTTP request was sent to HTTPS port</title></head> <body> <center><h1>400 Bad Request</h1></center> <center>The plain HTTP request was sent to HTTPS port</center> <hr><center>nginx</center> </body> </html> --- InstanceID: 227bd952xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxfe000596b4 --- Protocol: http:// --- Domain: <my.dyndns.domain> --- Port: 443 --- Domain does not point to this server or reverse proxy not configured correctly.
```

Does that make any sense to you?

I tried hardcoding the protocol to https://, but in that case I don’t get any response at all (it seems to be empty):

```
Response: --- InstanceID: 227bd952xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxfe000596b4 --- Protocol: https:// --- Domain: nullsec.root.sx --- Port: 443 --- Domain does not point to this server or reverse proxy not configured correctly.
```
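The debug output above suggests how the check works: a URL is built from protocol, domain and port, requested, and the response body is compared with the instance ID. The Python sketch below imitates that flow under stated assumptions; it is not AIO’s actual code (which lives in ConfigurationManager.php), the function names are hypothetical, and treating "body equals instance ID after trimming whitespace" as a pass is an assumption. It illustrates why `http://` against port 443 returns nginx’s 400 page instead of the ID, and why a failed connection shows up as an empty response.

```python
import http.client
import urllib.error
import urllib.request
from typing import Tuple


def domain_check_url(protocol: str, domain: str, port: int) -> str:
    """Combine the parts shown in the debug output, e.g. 'http://' + domain + ':443'."""
    return f"{protocol}{domain}:{port}"


def response_matches(response: str, instance_id: str) -> bool:
    """Assumption: the check passes when the body is exactly the instance ID."""
    return response.strip() == instance_id


def check_domain(protocol: str, domain: str, port: int, instance_id: str,
                 timeout: float = 5.0) -> Tuple[bool, str]:
    """Fetch the URL and return (passed, body) so a failure can be inspected."""
    url = domain_check_url(protocol, domain, port)
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as exc:
        # Non-2xx responses still carry a body, e.g. nginx's
        # "400 The plain HTTP request was sent to HTTPS port" page.
        body = exc.read().decode("utf-8", errors="replace")
    except (OSError, http.client.HTTPException):
        # DNS failure, refused connection, TLS error or timeout: no body at all,
        # similar to the empty "Response:" seen with https://.
        body = ""
    return response_matches(body, instance_id), body
```

For example, `check_domain("http://", "<my.dyndns.domain>", 443, "<instance id>")` would return `False` together with whatever page actually answered on that address and port.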

It seems the missing piece of the puzzle was setting up split-horizon DNS in my pfSense (Services > DNS Resolver > Host Overrides) so that <my.dyndns.domain> resolves to the local LAN IP when queried from within my network. Since this also covers name resolution on the on-prem virtual machine itself, it seems to have solved the issue with the failing check.

In short:

Wrong DNS resolution on the Docker machine behind the NAT firewall:

```
$ ping <my.dyndns.domain>
PING <my.dyndns.domain> (xxx.xxx.xxx.xxx) 56(84) bytes of data.
```

where xxx.xxx.xxx.xxx is the public IP of the firewall.

Correct DNS resolution on the Docker machine behind the NAT firewall:

```
$ ping <my.dyndns.domain>
PING <my.dyndns.domain> (192.168.xxx.xxx) 56(84) bytes of data.
```

where 192.168.xxx.xxx is the internal network IP.
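To confirm the split-horizon override from the Docker host (or from inside the mastercontainer, where python3 is available as shown earlier), a short Python sketch can resolve the domain and classify each address it gets back. The function names are hypothetical; it only uses the standard `socket` and `ipaddress` modules and reflects whatever resolver the machine running it is configured to use.

```python
import ipaddress
import socket
from typing import List


def resolve_ipv4(hostname: str) -> List[str]:
    """Return the distinct IPv4 addresses the local resolver gives for hostname."""
    infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({info[4][0] for info in infos})


def classify(address: str) -> str:
    """Label an address as loopback, private (LAN) or public."""
    ip = ipaddress.ip_address(address)
    if ip.is_loopback:
        return "loopback"
    if ip.is_private:  # covers 10/8, 172.16/12 and 192.168/16, among others
        return "private (LAN)"
    return "public"
```

With the Host Override in place, `[classify(a) for a in resolve_ipv4("<my.dyndns.domain>")]` should report the 192.168.x.x address as private; without it, the firewall’s public IP shows up as public, which is the situation in which the domain check failed here.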

Maybe it’s worth adding a remark about this to the official installation documentation, as a second scenario besides the reverse proxy.

Thanks for the idea, but honestly, to me the issue sounds very specific to your own network. For me it works without configuring any of this manually, but I also don’t have pfSense in place…

However, if you open a PR, I’ll consider merging it.

Yes, you are probably right that the issue is specific to my pfSense / my setup.

May I ask what happens when you access your DynDNS URL in a browser from within your network? Are you forwarded to your Nextcloud as expected?

For me, without the split-horizon DNS in place, the URL resolves to the external IP. When I then open it in a browser from an internal system (via DNS), I get a spoofing warning from pfSense, which is probably what confuses the Nextcloud AIO domain check.

So the port forwarding is ignored when connecting from an internal system via the external IP (forwarding such connections back into the LAN would require NAT reflection, also called hairpin NAT), and pfSense raises an alert because it doesn’t know why the DNS name points to it and assumes something fishy is going on.

If I access the external IP directly (without DNS), I’m presented with the pfSense login page (which, of course, is not reachable from outside my network).

Other routers/firewalls most likely behave completely differently here…

Honestly, I still don’t understand how your internal network works…

In my setup, AIO is installed on an Intel NUC running Ubuntu 20.04. Ports 443 and 8080 are open in the NUC’s firewall. I port-forward port 443 from my router to port 443 of the NUC and configure one of my domains to point to the external IP address of my network. I then access the AIO interface locally via port 8080 and enter the configured domain. The domain is accepted because it points to my network, port 443 is open, and the check is able to reach the domaincheck container. I can then start Nextcloud and automatically get a valid certificate and a correctly configured Nextcloud with all add-ons, etc.

With the next release, we’ll make the domain check more verbose and easier to debug. See "make the domain check more verbose and allow to debug it better" by szaimen · Pull Request #680 · nextcloud/all-in-one · GitHub.
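Whether ports 443 and 8080 accept TCP connections can be checked with a few lines of Python. This is a generic sketch, not part of AIO, and `port_is_open` is a hypothetical name. Keep in mind that running it from inside the LAN against the public IP goes through the router’s NAT handling, which is exactly what behaved differently in the pfSense setup discussed above, so only a check from outside the network really confirms the port forward.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves host and tries each returned address in turn.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, unreachable, timed out, or the name could not be resolved.
        return False
```

For example, `[(p, port_is_open("<server ip>", p)) for p in (443, 8080)]` lists the reachability of both ports from wherever the script runs.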

I’ll post my question here, as it is very similar to the ones above.

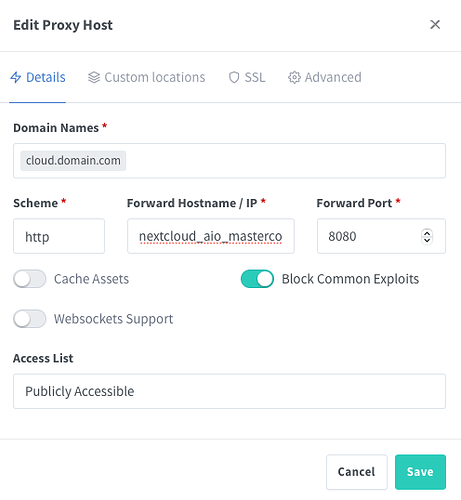

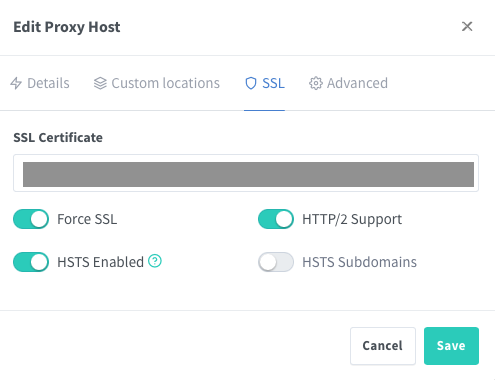

- I deployed NC-AiO:latest with your docker-compose file on a host with Portainer.
- APACHE_Port=11000 is set in docker-compose.yml
- Nginx Proxy Manager handles reverse proxying and SSL; it is set up and running on the same host, and all three containers share a network.
- Both containers are running
- Ports 80 and 443 are open in the firewall and point to the Nginx reverse proxy
- Nginx routes cloud.domain.com to nextcloud_aio_mastercontainer:8080, and I have a valid certificate for the domain and subdomain.

I can start the setup process, but NC reports that the domain does not point to this server.

I don’t think I understand what the domaincheck container does or why I need it. I assumed that, since I already have a reverse proxy running that handles SSL, NC doesn’t need to do that. Is that wrong?

Can you point me in the right direction @szaimen?

Hi, please follow all-in-one/reverse-proxy.md at main · nextcloud/all-in-one · GitHub. There is also an example for Nginx Proxy Manager.

Sorry, I should have mentioned this:

I followed the instructions exactly and it did not work. Safari shows “Can’t establish secure connection”.

I was only able to get into the setup with the configuration shown here.

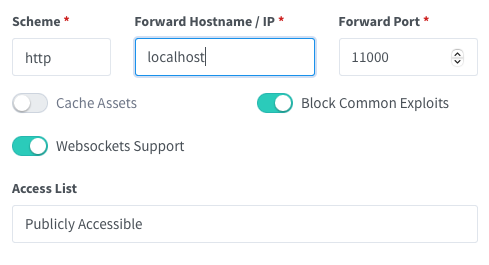

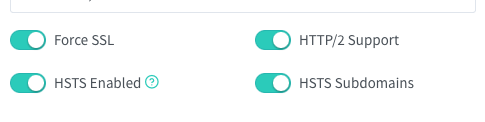

- The Docker host is running on the same machine as Nginx Proxy Manager
- All three containers share a network (which works with other containers)
- I tried:
  - http://localhost:11000
  - http://ip-to-docker-host:11000
  - http://nc-mastercontainer-id:11000
  - http://nc-domaincheckcontainer-id:11000

The only combinations that load a page are:

- https://nc-mastercontainer-id:8080
- https://ip-to-docker-host:8080

This matches the guide you posted, and it doesn’t work:

I see. Then Nginx Proxy Manager probably does not use the `network_mode: host` option? If so, you need to use the server’s IP address instead of localhost here, which you can get by running `ip a | grep "scope global" | head -1 | awk '{print $2}' | sed 's|/.*||'` on the server.

By the way, all of this is documented in detail in the reverse proxy documentation.
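The shell pipeline takes the first line of `ip a` output that contains "scope global", keeps its second whitespace-separated field, and strips the `/prefix` suffix. A rough Python equivalent is sketched below for anyone who wants the same lookup inside a script; the function names are hypothetical, and `server_ip` assumes the `ip` tool from iproute2 is installed, as it is on Ubuntu.

```python
import subprocess
from typing import Optional


def first_global_address(ip_a_output: str) -> Optional[str]:
    """Mimic: grep "scope global" | head -1 | awk '{print $2}' | sed 's|/.*||'."""
    for line in ip_a_output.splitlines():
        if "scope global" in line:             # grep + head -1: first match only
            fields = line.split()              # awk splits on runs of whitespace
            if len(fields) < 2:
                return None
            return fields[1].split("/", 1)[0]  # sed drops "/24" and everything after
    return None


def server_ip() -> Optional[str]:
    """Run `ip a` on this machine and return its first global-scope address."""
    result = subprocess.run(["ip", "a"], capture_output=True, text=True, check=True)
    return first_global_address(result.stdout)
```

Like the original pipeline, this returns whichever global-scope address is listed first, which could be an IPv6 address on some hosts. The result would take the place of localhost in a proxy target such as http://localhost:11000 (the APACHE_PORT value from the compose file above).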

I tried network_mode: host as well as the host IP (verified with ip a […]).

I have over 30 proxy hosts and streams set up in this instance without issues, about half of them deployed on the same Docker host. But I still don’t understand the purpose of the domaincheck container and why I’m forced to use SSL. Isn’t it possible to deploy NC and leave SSL to my own reverse proxy?

That is exactly the idea. However, if nothing works even with the `network_mode: host` option on the Nginx Proxy Manager container, you may be running into an issue with the domain validation. The next release will allow skipping it: allow to skip the domain validation and add documentation for cloud… by szaimen · Pull Request #873 · nextcloud/all-in-one · GitHub

Another option that is already possible is this: all-in-one/manual-install at main · nextcloud/all-in-one · GitHub
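On the question of what the domaincheck container is for: the debug output earlier in this thread shows the mastercontainer comparing the HTTP response from the domain with its instance ID. Assuming the domaincheck container simply answers that request on APACHE_PORT during setup, the check can only pass if the domain really reaches this server, either directly or through the reverse proxy. The Python sketch below imitates such a responder with the standard library; it is an illustration under that assumption, not the container’s actual implementation, and `serve_instance_id` is a hypothetical helper.

```python
import http.server
import threading


def make_handler(instance_id: str):
    """Build a request handler that answers every GET with the instance ID."""
    body = instance_id.encode("utf-8")

    class InstanceIdHandler(http.server.BaseHTTPRequestHandler):
        def do_GET(self):
            self.send_response(200)
            self.send_header("Content-Type", "text/plain; charset=utf-8")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, format, *args):
            pass  # silence per-request logging in this sketch

    return InstanceIdHandler


def serve_instance_id(instance_id: str, host: str = "0.0.0.0",
                      port: int = 11000) -> http.server.ThreadingHTTPServer:
    """Start a plain-HTTP responder in a background thread (hypothetical helper).

    11000 mirrors the APACHE_PORT value used earlier in this thread; pass
    port=0 to let the OS pick a free port.
    """
    server = http.server.ThreadingHTTPServer((host, port), make_handler(instance_id))
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

Because the responder speaks plain HTTP on that port, a reverse proxy terminating SSL in front of it is compatible with this kind of check, as long as requests for the domain actually end up there.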
